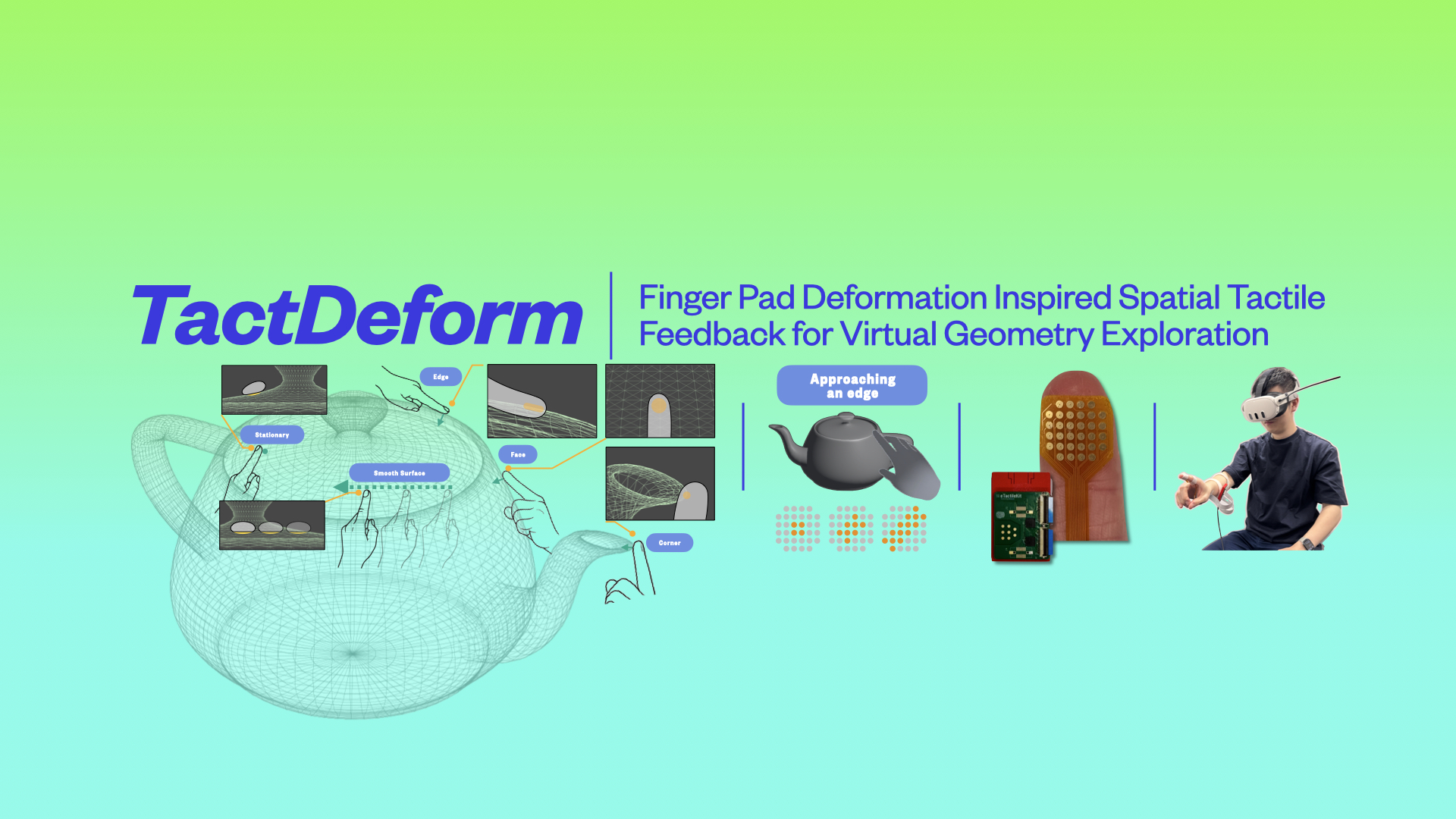

TactDeform: Finger Pad Deformation Inspired Spatial Tactile Feedback for Virtual Geometry Exploration

TactDeform is a parametric approach that renders spatio-temporal electro-tactile patterns inspired by natural finger pad deformations, enabling users to feel geometric features and textures of virtual objects through a lightweight finger-worn interface.

Overview

TactDeform is a parametric approach to rendering spatio-temporal tactile patterns using a finger-worn electro-tactile interface for virtual reality. Inspired by how our finger pads naturally deform when touching real-world 3D objects, TactDeform dynamically adapts electro-tactile stimulation based on both interaction contexts (approaching, contact, sliding) and geometric contexts (features and textures), emulating the deformations that occur during real-world touch exploration.

Vision

When we explore physical objects, our fingertips deform in characteristic, feature-dependent ways — spreading across flat surfaces, concentrating along edges, and pressing into corners. These deformation patterns carry rich spatial information that our mechanoreceptors decode for shape and texture perception. TactDeform brings this natural tactile understanding into virtual reality, enabling users to feel the geometry of virtual objects through lightweight, wearable electro-tactile feedback — without bulky force-feedback hardware.

How It Works

TactDeform uses a dual-context approach to generate parametric spatio-temporal electro-tactile patterns via a 32-electrode finger-worn array:

Interaction Contexts

- Approaching: As the finger approaches a virtual surface, expanding stimulation patterns emulate the progressive deformation of initial contact. The expansion rate is parametrically linked to approach velocity.

- Stationary Contact: During sustained contact, orientation-specific patterns adapt based on the finger's angle relative to the surface, emulating how different finger pad regions experience varying pressure.

- Sliding: When moving across surfaces, dynamic pattern shifts emulate friction-induced deformations, with shift rates modulated by surface roughness and movement velocity.
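
The three interaction contexts can be read as a small state classifier driven by the finger's distance and velocity relative to the surface. The sketch below is illustrative only and is not taken from the TactDeform implementation: the function names, the thresholds (`APPROACH_RANGE_M`, `SLIDE_SPEED_M_S`), the extra `FREE` state, and the linear, clamped mapping from approach speed to expansion rate are all assumptions.

```python
from enum import Enum


class InteractionContext(Enum):
    FREE = "free"                      # assumed extra state: finger far from any surface
    APPROACHING = "approaching"
    STATIONARY_CONTACT = "stationary_contact"
    SLIDING = "sliding"


# Hypothetical thresholds, chosen only for illustration.
APPROACH_RANGE_M = 0.02   # start rendering within 2 cm of the surface
SLIDE_SPEED_M_S = 0.005   # tangential speed above which contact counts as sliding


def classify_interaction(distance_m: float,
                         normal_speed_m_s: float,
                         tangential_speed_m_s: float) -> InteractionContext:
    """Pick an interaction context.

    distance_m: signed distance to the surface (<= 0 means touching).
    normal_speed_m_s: speed towards the surface (positive = closing in).
    tangential_speed_m_s: speed along the surface.
    """
    if distance_m <= 0.0:
        if tangential_speed_m_s > SLIDE_SPEED_M_S:
            return InteractionContext.SLIDING
        return InteractionContext.STATIONARY_CONTACT
    if distance_m <= APPROACH_RANGE_M and normal_speed_m_s > 0.0:
        return InteractionContext.APPROACHING
    return InteractionContext.FREE


def expansion_rate(approach_speed_m_s: float,
                   gain: float = 50.0,
                   max_rate: float = 10.0) -> float:
    """Pattern expansion rate in electrode pitches per second.

    Assumed form: linear in approach speed, clamped to [0, max_rate].
    """
    return min(max_rate, max(0.0, gain * approach_speed_m_s))
```

In a render loop, `classify_interaction` would run once per frame, and the returned context would select which pattern generator to drive.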

Geometric Contexts

- Faces: Planar surfaces produce diverging ring patterns expanding from the contact centre.

- Edges: Linear features create directional line patterns extending from the centre.

- Corners: Point features concentrate stimulation at the array centre with parametric falloff.

- Textures: Three roughness levels (smooth, rough, rougher) modulate pattern shift dynamics during sliding.
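
As a rough illustration of how the geometric contexts could map onto electrode intensities, the sketch below generates one frame per feature type. Everything here is an assumption made for the example: the source does not state the physical layout of the 32 electrodes (an 8 x 4 grid is used), and the function names, ring and line widths, Gaussian falloff, and roughness gains are hypothetical placeholders, including the direction in which roughness changes the shift rate.

```python
import math

ROWS, COLS = 8, 4  # hypothetical layout: 32 electrodes as 8 rows x 4 columns
CENTRE = ((ROWS - 1) / 2.0, (COLS - 1) / 2.0)  # array centre in (row, col) units


def _blank():
    return [[0.0] * COLS for _ in range(ROWS)]


def face_pattern(radius, width=0.75):
    """Ring of the given radius (in electrode pitches) around the centre.

    Increasing `radius` over successive frames gives a diverging ring.
    """
    frame = _blank()
    for r in range(ROWS):
        for c in range(COLS):
            d = math.hypot(r - CENTRE[0], c - CENTRE[1])
            if abs(d - radius) <= width:
                frame[r][c] = 1.0
    return frame


def edge_pattern(angle_rad, length, width=0.75):
    """Line through the centre along angle_rad, reaching `length` pitches each way."""
    frame = _blank()
    ux, uy = math.cos(angle_rad), math.sin(angle_rad)  # direction as (col, row)
    for r in range(ROWS):
        for c in range(COLS):
            dy, dx = r - CENTRE[0], c - CENTRE[1]
            along = dx * ux + dy * uy          # position along the line
            across = abs(-dx * uy + dy * ux)   # distance from the line
            if abs(along) <= length and across <= width:
                frame[r][c] = 1.0
    return frame


def corner_pattern(sigma=1.0):
    """Intensity concentrated at the centre with an assumed Gaussian falloff."""
    frame = _blank()
    for r in range(ROWS):
        for c in range(COLS):
            d = math.hypot(r - CENTRE[0], c - CENTRE[1])
            frame[r][c] = math.exp(-(d * d) / (2.0 * sigma * sigma))
    return frame


# Placeholder gains for the three roughness levels named in the text.
ROUGHNESS_GAIN = {"smooth": 0.5, "rough": 1.0, "rougher": 2.0}


def shift_rate(texture, slide_speed_m_s, base=100.0):
    """Pattern shift rate during sliding, scaled by roughness and speed (assumed linear)."""
    return base * ROUGHNESS_GAIN[texture] * slide_speed_m_s
```

Each function returns an 8 x 4 list of intensities in the range 0 to 1. A real driver would still need to map these values to per-electrode pulse parameters, which this sketch leaves out.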

Evaluation

A user study with 24 participants across two phases validated TactDeform's effectiveness:

- 85.7% accuracy in geometric feature identification (faces, edges, corners)

- 95.8% accuracy in texture discrimination (smooth, rough, rougher)

- 58% of participants spontaneously developed edge-probing exploration strategies

- Significant learning effects observed, with accuracy improving from 75.0% to 89.9% across trials

- Qualitative analysis revealed emergent tactile-guided 3D geometry exploration behaviours

Applications

- Medical Training: Enhancing surgical simulations with spatial tactile feedback for recognising edges, corners, and surface transitions.

- Accessibility: Providing shape communication for visually impaired users navigating XR environments.

- Industrial Design: Supporting virtual prototyping where subtle geometric features carry critical information.

- Interactive Entertainment: Enabling realistic touch exploration of virtual objects and environments.

Open Source

TactDeform is released as an open-source implementation with design guidelines for integrating electro-tactile feedback into VR applications.

Related Publications

TactDeform: Finger Pad Deformation Inspired Spatial Tactile Feedback for Virtual Geometry Exploration

Y Dong, PB Perera, CT Lin, CT Jin, A Withana